In the rapidly evolving field of artificial intelligence, one should be cautious before praising any technology company too enthusiastically. Corporations operate under powerful financial incentives, investor expectations, and government pressures. A position that appears principled today can sometimes shift tomorrow when circumstances change.

At the same time, when a company appears willing to bear real cost in order to uphold publicly stated ethical boundaries, that moment deserves acknowledgment. Recently, the AI company Anthropic found itself at the center of such a moment when it refused to weaken certain surveillance safeguards embedded in its artificial intelligence systems.

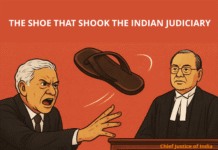

Reports indicate that Anthropic declined requests from the U.S. Department of Defense to modify safeguards that prevent its AI models from being used for mass domestic surveillance and fully autonomous weapons systems. The Pentagon reportedly sought broader contractual language that would allow “any lawful use” of the technology.

Commendably, Anthropic chose not to accept those terms. I believe it knew the consequences.

The disagreement escalated quickly. U.S. defense authorities subsequently designated the company a “supply chain risk,” effectively restricting the use of Anthropic’s technology within defense infrastructure. Such a designation carries serious implications for any technology firm, particularly in an era when government contracts in artificial intelligence can be worth hundreds of millions of dollars.

Whether one agrees with every aspect of its policies or not, the company’s refusal to dilute its surveillance safeguards, even under significant pressure, deserves recognition. It suggests that at least in this instance, stated principles were not immediately traded for financial advantage.

This episode also illustrates a broader reality about the artificial intelligence industry. When one company declines a particular path, another is usually prepared to move forward.

Following the dispute, companies in the ecosystem around Microsoft, among others, continued expanding cooperation with OpenAI, whose systems, including those behind ChatGPT, are increasingly integrated into government and enterprise environments. In a competitive technology market, such developments are not surprising. Governments will continue seeking partnerships with firms willing to deploy AI systems within security and defense frameworks.

However, the public reaction to these developments revealed something interesting.

Many users began questioning how far artificial intelligence should extend into surveillance and military infrastructure. Online discussions among developers and technology observers reflected growing unease about the possibility that powerful AI systems could become tools for large-scale monitoring or autonomous weaponization.

Market behavior seemed to reflect that concern. During the controversy, Anthropic’s chatbot Claude experienced a noticeable surge in interest and downloads. In some app rankings in the United States, Claude temporarily surpassed ChatGPT in popularity.

Technology markets move quickly, and public attention often shifts just as rapidly. Still, the episode demonstrates that users are not entirely indifferent to how artificial intelligence is deployed. Trust and integrity can influence public choices.

Yet it would be unwise to interpret this moment as proof that any single technology company deserves unconditional trust.

Technology companies are commercial entities, not philosophical institutions, although they often boast about their “philosophy”. Their decisions are shaped by a combination of values, reputation, incentives, competitive pressures, and geopolitical realities. History repeatedly shows that corporate commitments can evolve when circumstances change.

For that reason, the real safeguard does not lie in assuming that one company will always act more responsibly than another. The real safeguard lies in sustained public scrutiny.

When users, journalists, technologists, social watchdogs and policymakers remain attentive to how artificial intelligence is deployed, companies face stronger incentives to maintain the ethical boundaries they publicly endorse. Public attention creates accountability. Without it, even well-intentioned safeguards can erode over time.

Artificial intelligence will increasingly influence surveillance capabilities, national security infrastructure, and the digital lives of billions of people. Decisions made today will shape norms and expectations for decades.

Within that broader context, Anthropic’s refusal to compromise on surveillance safeguards represents a noteworthy moment in the ongoing AI ethics debate. Not because any corporation should be elevated as a moral authority. But because, at least on this occasion, a company drew a line and accepted the consequences of doing so.

In an age when technological capability often advances faster than ethical reflection, the act of drawing such a line deserves acknowledgment.

While appreciating the many technological discoveries of modern society and encouraging their use in the service of the Supreme Lord, the founder-acharya of ISKCON, Śrīla A. C. Bhaktivedanta Swami Prabhupāda, clearly warned about the consequences of neglecting higher principles. In his commentary on the Śrīmad-Bhāgavatam (7.9.43), he writes:

“The so-called scientists and philosophers are simply misleading the people because they do not know the ultimate goal of life.”

The observation remains strikingly relevant today.